Content Spotlight: High Performance Computing and GENE-3D

Written by Marion E. Smedberg

This article is the third installment of the “Content Spotlight” series, in which we focus on a particular topic and conduct a brief interview with an expert in the field. The aim of these Spotlights is to build a unique resource for fusion students: a collection of the most important fusion topics and advances, and of how these topics shape the fusion world today.

High Performance Computing (HPC) has been driving advances in science, technology, medicine, and many other fields since its beginnings in the 1960s. After years of development, the Cray-1 supercomputer debuted in 1975 with a peak processing capability of 160 megaFLOPS. (FLOPS stands for Floating Point Operations Per Second, a measure of how many calculations a computer can perform. A computer running at 10 FLOPS can perform roughly 10 floating-point calculations per second. A megaFLOPS is one million, or 10⁶, FLOPS.) Systems coming online in 2021 are expected to break the "exascale" barrier, achieving exaFLOPS performance (one exaFLOPS is one billion billion, or 10¹⁸, FLOPS).
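For a sense of scale, the two figures above can be compared directly. The worked ratio below uses only the Cray-1's 160 megaFLOPS peak and the nominal 10¹⁸ FLOPS exascale threshold. It is a rough, on-paper comparison that ignores differences between peak and sustained performance.

```latex
\frac{1\ \text{exaFLOPS}}{160\ \text{megaFLOPS}}
  = \frac{10^{18}\ \text{FLOPS}}{1.6 \times 10^{8}\ \text{FLOPS}}
  = 6.25 \times 10^{9}
```

In other words, an exascale system would be roughly six billion times faster than the Cray-1, at least in terms of peak arithmetic throughput.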

HPC plays an important role in the field of nuclear fusion. Supercomputers all over the world are used to simulate plasma-wall interactions, plasma turbulence, neutron loading, and optimization problems such as the one undertaken to design the stellarator W7-X. How has this role evolved over time, from the emergence of supercomputing until now? How will this role change in the exascale era? We asked Frank Jenko, head of the Tokamak Theory department at IPP Garching and lead developer of the GENE code.

To set the stage, HPC use in fusion research has come a long way since HPC’s beginnings in the 60s. What were the first uses of HPC in fusion research, and how has this evolved over the years?

HPC has always been extremely important for fusion research, given that the fundamental equations (kinetic or fluid) and many physical phenomena (like turbulence) are inherently nonlinear, which limits what analytical theory can achieve. Initially, the focus was on very simple, highly idealized models, since these were tractable with the HPC resources of the day. Over time, however, the models became more comprehensive and realistic, allowing for quantitative comparisons with experimental measurements. This happened first in areas like linear magnetohydrodynamics (MHD) and neoclassical transport, which tend to be more amenable to computation. Later, the focus shifted to more complex issues like turbulent transport and nonlinear MHD. More recently, we have come to realize that many physical processes in fusion plasmas that were investigated in isolation for many years, such as energetic-particle physics and turbulent transport, are actually deeply connected. So we are entering the multi-physics, multi-scale era of fusion simulation.

One of the ways fusion researchers use HPC is to model plasma turbulence. Can you explain what makes this application complex, yet worthwhile?

Turbulence is widely considered one of the most important and fascinating unsolved problems in physics. It is a paradigmatic example of nonlinear dynamics in complex systems, involving a continuous exchange of energy between a very large number of degrees of freedom and combining elements of chaos and order in interesting ways. Moreover, turbulence connects fundamental questions in theoretical physics and mathematics with a range of neighboring disciplines, from astrophysics to biophysics. At the same time, turbulence is of immense practical importance in numerous application areas, including weather prediction and combustion. In the context of fusion research, turbulence sets the global energy confinement time, which is of course a key figure of merit of any magnetic confinement fusion device. Therefore, it is our aim to understand, predict, and control turbulent transport, and to study its interactions with other physical processes, like MHD instabilities and edge physics. A predictive capability in the area of plasma turbulence constitutes a key element of a future virtual fusion plasma.

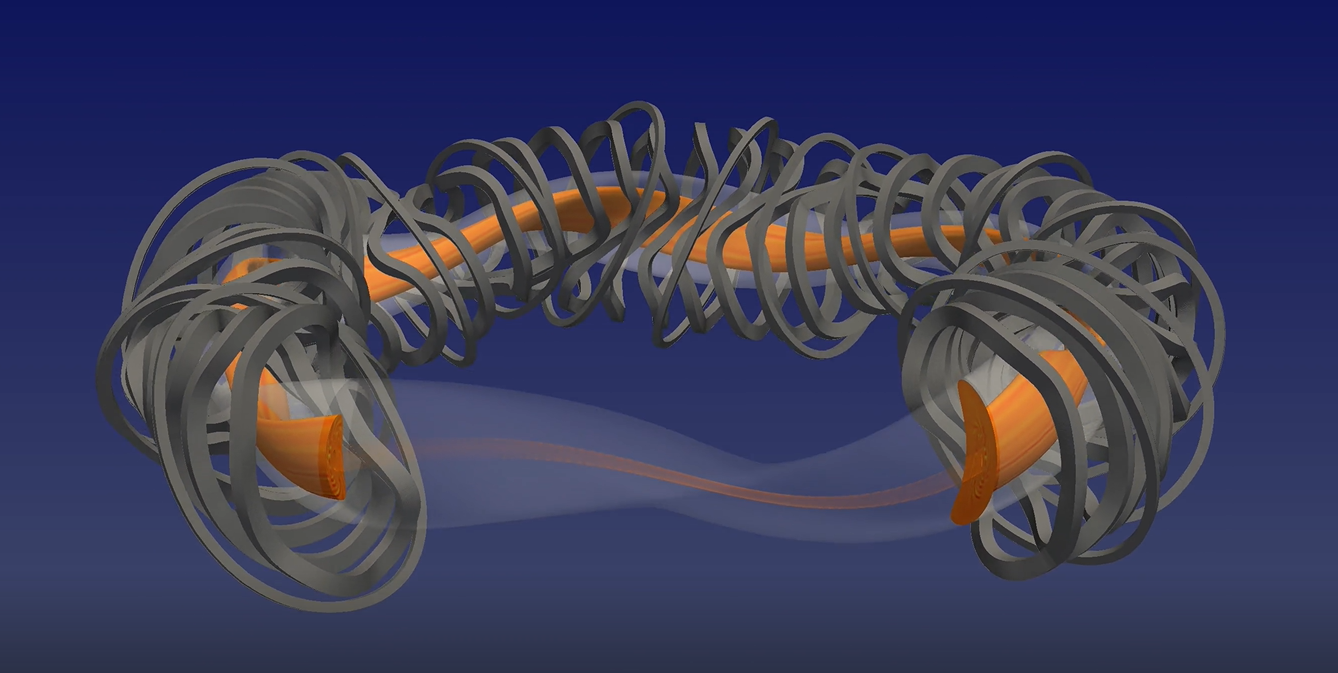

You’ve kindly provided us with a video of a plasma turbulence simulation from GENE-3D, one of the recent gyrokinetic codes developed in your group. What makes this code and this simulation unique, and how did HPC enable this?

Plasma turbulence simulations are based on the gyrokinetic equations. The first attempts to solve these equations on HPC systems were undertaken in the 1980s and 1990s as proof-of-principle exercises, based on a long list of simplifying assumptions. At this stage, the emphasis was mainly on understanding the fundamental character of plasma turbulence. Starting around 2000, the simulations gradually became more realistic, providing quantitative results that could be compared with experimental findings. The GENE family of codes played an important role in this context. The first version of GENE used a simple flux-tube geometry, minimizing the computational cost. Later, full-volume simulations became possible, but only for axisymmetric devices, i.e., tokamaks. Several years ago, a full-flux-surface version of GENE was developed and applied to stellarators. Finally, in 2020, after a multi-year code development effort, we arrived at GENE-3D, which enables full-volume simulations of stellarators, including important physical effects like magnetic field fluctuations and collisions. First applications to Wendelstein 7-X have been initiated, enabled by state-of-the-art HPC systems. These systems are vital for accurately capturing the turbulent dynamics in such complex geometries.

Finally, a new era of HPC, namely the exascale era, is due to begin this year. In your opinion, what avenues of fusion research will this open, and what benefits could this have for the fusion field? What will HPC not be capable of?

The prospects of exascale computing are very intriguing for fusion research. For the first time, we will be able to accurately capture essential aspects of fusion plasmas based on first-principles models, including various interactions between different physical processes. This development will allow us to become truly predictive, extrapolating from existing devices and plasma regimes to future ones with a high level of confidence. Needless to say, such a capability will greatly facilitate the development and optimization of next-step devices and future power plants. While a full-blown virtual tokamak or stellarator may be out of reach for quite some time, critical aspects of fusion physics, from heat and particle exhaust to the avoidance and control of disruptions, will greatly benefit from such efforts. Exascale computing is therefore viewed by many as a likely game changer for fusion research, offering new ways to guide and accelerate it. It is the right new "technology" at the right time, promising exciting new R&D opportunities.

More information about GENE-3D, along with many other resources, is available on the FuseNet website's Educational Materials page.